The OQVA Work-in-Progress Pipeline

This is how we run AI agents to build ventures. It's not finished. Parts break, parts change, parts get replaced every week. We're publishing it anyway because the pipeline isn't the thing that makes us.

The Problem

Everyone working with AI already has a pipeline. They just don't call it that.

You open a chat, prompt an agent, get a result. Copy that result into another tool. Prompt another agent with new context. Get another result. Paste it somewhere. Review it. Adjust. Prompt again. Maybe you have three terminals open with Claude Code, each one working on a different piece, and you're the router — copying context between them, deciding what runs next, keeping track of what's done and what isn't.

That's a pipeline. It's just manual, it lives in your head, and nothing is recorded.

Two problems with that.

It doesn't scale. If a project needs ten tasks done in parallel, you're not opening ten terminals and babysitting ten agents. You'll do two or three at a time and bottleneck on yourself.

> The agents aren't slow. You are.

It has no memory. When it's done, there's no record of what happened. Why was this decision made? What did the agent produce on the first pass? What did the reviewer flag? What changed between version one and version four? It's gone. Scattered across chat windows you already closed.

We wanted two things: automate the manual pipeline so agents work without us sitting in front of them, and keep full context of every decision, every review, every line of code changed and why.

That's what this is.

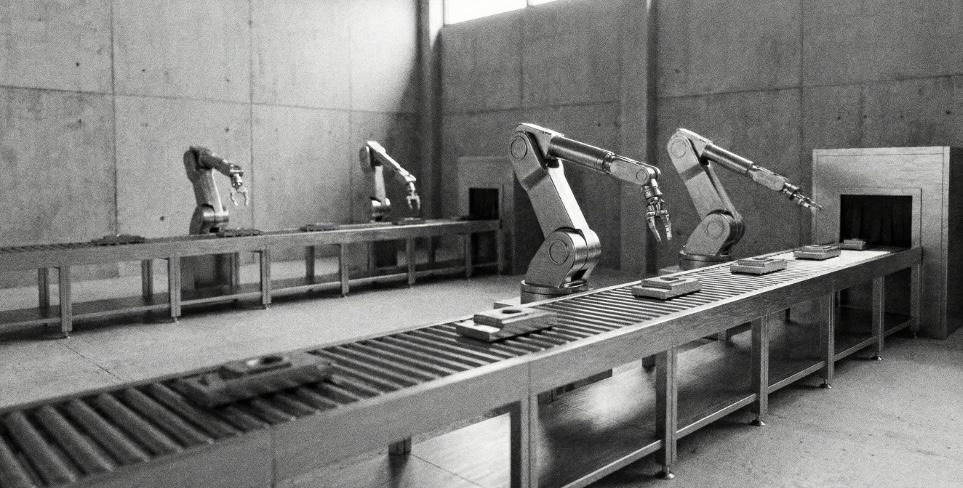

The Stack

Everything is self-hosted. Not for ideology — for control. We need to customize every layer, swap models when something better shows up, and not depend on anyone's pricing changes or API limits to keep our pipeline running.

- Plane — Project management. Every project, every task, every state transition lives here. We use Pages for long-form documents (research, brand guides, design systems) and Work Items for trackable tasks. Custom states act as pipeline stages — when a work item moves from one state to the next, things happen.

- n8n — Orchestration. Plane sends webhooks on every update. n8n filters the noise and triggers workflows on the state transitions that matter. It decides what runs when.

- Redis — Simple queue. n8n pushes jobs to Redis. Workers pull from Redis. That's it. No fancy job framework.

- Docker — Every worker is a container. Isolated, reproducible, disposable. Scale by spinning up more containers on more machines.

- Mac Minis + VPS — The physical layer. All machines listen to the same Redis queue. A job doesn't care if it runs on a Mac Mini under a desk or a VPS in Frankfurt.

- Claude Code + Local Models + Gemini — The agents inside the containers. Claude Code for coding tasks. Gemini for research and brand work. Local models like Kimi for specific tasks where we want speed or privacy. The model is a flag — swap it without touching the pipeline.
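The queue layer in this stack is small enough to sketch. The following is a minimal illustration under stated assumptions, not the production code: the queue name `jobs`, the JSON job shape, and the `InMemoryQueue` stand-in are all hypothetical, chosen so the example runs without a server. The two list operations it relies on, `lpush` and `brpop`, are the ones the redis-py client exposes, so a real client could be passed in place of the stand-in.

```python
import json
from collections import deque
from typing import Optional, Protocol


class QueueClient(Protocol):
    """The two list operations this sketch needs."""

    def lpush(self, name: str, *values: str) -> int: ...
    def brpop(self, keys: str, timeout: int = 0) -> Optional[tuple]: ...


class InMemoryQueue:
    """Stand-in for a Redis client so the example runs without a server."""

    def __init__(self) -> None:
        self._lists: dict[str, deque] = {}

    def lpush(self, name: str, *values: str) -> int:
        items = self._lists.setdefault(name, deque())
        for value in values:
            items.appendleft(value)
        return len(items)

    def brpop(self, keys: str, timeout: int = 0) -> Optional[tuple]:
        # A real BRPOP blocks until a job arrives or the timeout expires;
        # this stand-in simply returns None when the list is empty.
        items = self._lists.get(keys)
        if not items:
            return None
        return (keys, items.pop())


QUEUE = "jobs"  # hypothetical queue name


def push_job(client: QueueClient, job: dict) -> None:
    """Orchestrator side: serialize the job and push it onto the list."""
    client.lpush(QUEUE, json.dumps(job))


def pull_job(client: QueueClient) -> Optional[dict]:
    """Worker side: take the oldest job, or None if nothing is waiting."""
    item = client.brpop(QUEUE, timeout=5)
    if item is None:
        return None
    _key, raw = item
    return json.loads(raw)


client = InMemoryQueue()
push_job(client, {"task": "A"})
push_job(client, {"task": "B"})
first = pull_job(client)  # the job pushed first comes out first
```

Pushing on the left and popping on the right gives first-in, first-out order. Because each pop removes the job from the list, any number of workers on any machine can call `pull_job` against the same queue without two of them receiving the same job.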

Because everything runs through Plane, every action is recorded. Every agent output is a linked Page. Every review is a comment. Every state transition is logged. Six months from now, we can open any work item and see the full history — what was generated, what was rejected, what was changed, and what the reasoning was at every step. The context doesn't disappear when you close a tab. It lives on the work item forever.

The Internal Pipeline

This is how a new venture moves from an idea to something that gets built.

Every venture starts as a single work item in Plane. That work item travels through a pipeline of states. Each state either triggers an AI worker, requires a human review, or both.

Venture pipeline states:

- New Idea → Paper Validation → Go / No Go → Conscious Contrast → Brand Verification → Design System & Copy → Design System & Copy Verification → Content And Traffic Loop → Validation Review → Design Creation → Design Creation Verification → Build or Recycle

How it works

When a work item enters a trigger state — say, "Conscious Contrast" — Plane fires a webhook. n8n catches it, parses the payload, and pushes a job to Redis with all the context the worker needs: the premise, the market analysis, any linked pages from previous steps.
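The filtering step can be sketched as a single function. This is an illustration of the logic only: the payload field names (`event`, `old_state`, `new_state`, `work_item`, `linked_pages`) are hypothetical and do not reflect Plane's actual webhook schema, and the set of trigger states is an assumption. In n8n the same logic would live in a workflow node rather than in Python.

```python
from typing import Optional

# States that start an AI worker. Which states trigger is an assumption here.
TRIGGER_STATES = {"Paper Validation", "Conscious Contrast", "Design System & Copy"}


def job_from_webhook(payload: dict) -> Optional[dict]:
    """Return a job for the queue, or None if the update is noise."""
    if payload.get("event") != "work_item.updated":
        return None
    old_state = payload.get("old_state")
    new_state = payload.get("new_state")
    # Ignore edits that do not change state, and states no worker handles.
    if old_state == new_state or new_state not in TRIGGER_STATES:
        return None
    item = payload["work_item"]
    return {
        "work_item_id": item["id"],
        "stage": new_state,
        "premise": item.get("description", ""),
        "linked_pages": item.get("linked_pages", []),
    }


transition = {
    "event": "work_item.updated",
    "old_state": "Go / No Go",
    "new_state": "Conscious Contrast",
    "work_item": {"id": "V-12", "description": "premise text", "linked_pages": ["market-analysis"]},
}
job = job_from_webhook(transition)
```

Most webhook deliveries (title edits, comments, moves into human-only states) return `None` and go no further. Only a move into a trigger state produces a job.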

A worker picks up the job. Inside the container, the AI agent runs the task — in this case, generating a brand coherence guide using our Conscious Contrast Framework. The output gets written back to Plane as a linked Page on the work item.

Then the work item moves to a verification state. A human reads the output. If it's good, they move it forward. If it's not, they add comments — either on Plane or directly on the document — and move it back. The worker picks it up again with the new context and iterates.
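The approve-or-send-back step amounts to a small state transition. The sketch below uses the state names from the list above; the pairing of each verification state with the worker state it reviews is inferred from the order of that list and should be read as an assumption, as should the function name `after_review`.

```python
PIPELINE = [
    "New Idea",
    "Paper Validation",
    "Go / No Go",
    "Conscious Contrast",
    "Brand Verification",
    "Design System & Copy",
    "Design System & Copy Verification",
    "Content And Traffic Loop",
    "Validation Review",
    "Design Creation",
    "Design Creation Verification",
    "Build or Recycle",
]

# Verification state -> the worker state whose output it reviews (assumed pairing).
REVIEWS = {
    "Brand Verification": "Conscious Contrast",
    "Design System & Copy Verification": "Design System & Copy",
    "Design Creation Verification": "Design Creation",
}


def after_review(state: str, approved: bool, comments: tuple = ()) -> tuple:
    """Return (next_state, context_for_worker) after a human review."""
    if state not in REVIEWS:
        raise ValueError(f"{state!r} is not a verification state")
    if approved:
        # Approved output moves to the next state in the pipeline.
        return PIPELINE[PIPELINE.index(state) + 1], []
    # Rejected output goes back to the worker state, carrying the comments.
    return REVIEWS[state], list(comments)


forward = after_review("Brand Verification", approved=True)
back = after_review("Brand Verification", approved=False, comments=("tone too formal",))
```

Sending the item back to its worker state is what re-triggers the webhook, so the second pass needs no separate mechanism. The reviewer's comments simply become part of the next job's context.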

Every step adds linked pages to the work item: market research, CAC analysis, brand guidelines, tone of voice, copy direction. By the time a venture reaches "Build or Recycle," the work item has a full portfolio of documents attached to it — all generated by agents, all reviewed by humans, all traceable.

"Recycle" means we think the outputs need another pass through part of the pipeline. It's not failure. It's iteration.

It's a four-hands-plus-robots process. Humans—us and the founders—are in the loop at every verification step.

The Coding Pipeline

Once a venture reaches "Build," we need to ship software. Same infrastructure, different pipeline.

Here the Plane project is the actual product. Each work item is a coding task. We use TaskMaster AI to break down the full project scope into tasks with dependencies, so we can parallelize the work across multiple agents.

Coding pipeline states:

- Backlog → Ready for Dev → In Progress / Blocked / Needs Input → In Review → Review Approved → Merge → Done

How it works

When a task moves to "Ready for Dev," n8n pushes a job to Redis. A worker container picks it up. Inside the container: GitHub access and Claude Code. The job payload from Redis contains everything the agent needs — the task description, acceptance criteria, repo reference, branch conventions, and context from dependent tasks.

The agent clones the repo, creates a branch, writes the code, and pushes it.

The task moves to "In Review." A different worker picks it up — running a different model than the one that wrote the code. This is deliberate. If the same model codes and reviews, it tends to find its own output acceptable. A different model catches different things.

If the reviewer finds issues, the task moves back to development with the review comments. The dev agent picks it up again, addresses the feedback, pushes again. This loop continues until the review agent approves.
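The write-and-review loop can be sketched with two callables standing in for the agents. Everything here is illustrative: `write` and `review` are hypothetical stand-ins for the dev and review agents, the model names are placeholders, and `max_rounds` is an added safety limit that the description above does not mention.

```python
from typing import Callable


def pick_reviewer(author_model: str, available: list[str]) -> str:
    """Choose a reviewer model that differs from the one that wrote the code."""
    for model in available:
        if model != author_model:
            return model
    raise ValueError("no available model differs from the author model")


def review_loop(
    write: Callable[[list[str]], str],
    review: Callable[[str], list[str]],
    max_rounds: int = 5,
) -> tuple:
    """Alternate writing and reviewing until the reviewer raises no issues.

    write(feedback) returns a new revision; review(code) returns a list of
    issues, where an empty list means approval.
    """
    feedback: list[str] = []
    for round_number in range(1, max_rounds + 1):
        code = write(feedback)
        feedback = review(code)
        if not feedback:
            return code, round_number
    raise RuntimeError(f"no approval after {max_rounds} rounds; escalate to a human")


# Stub agents: the first revision is rejected, the second is approved.
seen_feedback = []


def stub_write(feedback: list[str]) -> str:
    seen_feedback.append(list(feedback))
    return f"v{len(seen_feedback)}"


def stub_review(code: str) -> list[str]:
    return [] if code == "v2" else ["missing tests"]


approved_code, rounds = review_loop(stub_write, stub_review)
reviewer = pick_reviewer("model-a", ["model-a", "model-b"])
```

The round limit is there because two agents can disagree indefinitely. When it is hit, handing the task to a human is one reasonable fallback.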

Once agents approve, a human does a final review. If it passes, it moves to "Merge." If not, we add comments on GitHub or Plane and send it back.

Dependencies and parallelism

Plane tracks relationships between tasks. If Task B depends on Task A, Task B stays in "Blocked" until Task A reaches "Merge." Once unblocked, it automatically becomes available for a worker.

Tasks without dependencies run in parallel. Multiple containers, multiple agents, multiple tasks — all at the same time. If TaskMaster breaks a project into forty tasks and ten of them have no dependencies on each other, ten agents pick them up simultaneously.
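To see how a dependency graph turns into parallel work, here is a minimal sketch using `graphlib.TopologicalSorter` from the Python standard library (3.9+). The task names and the `parallel_waves` helper are hypothetical. Grouping tasks into waves is a simplification: in the pipeline described here, a task becomes available as soon as its own dependencies are merged, not at a wave boundary.

```python
from graphlib import TopologicalSorter


def parallel_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave can run at the same time.

    deps maps each task to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are all done
        waves.append(ready)
        sorter.done(*ready)  # treat the whole wave as merged
    return waves


deps = {
    "A": set(),
    "B": {"A"},
    "C": set(),
    "D": {"B", "C"},
}
waves = parallel_waves(deps)
# → [['A', 'C'], ['B'], ['D']]
```

The number of waves is a lower bound on how many sequential rounds the project needs, however many workers are available. The width of each wave shows how many agents can be busy at once.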

No one opens ten terminals. No one copies context between windows. The pipeline handles it.

The bottleneck is never "waiting for a developer." It's the dependency graph.

The Workers

A worker is a Docker container with a simple job: pull a task from Redis, execute it, push the result back.

What's inside a coding worker

- GitHub CLI (authenticated)

- Claude Code (or whatever model is configured)

- The job context from Redis (task description, repo, branch, dependencies, constraints)

That's it. The container doesn't know about Plane. It doesn't know about n8n. It doesn't know about other workers. It gets a job, does the job, reports back. Everything else is orchestration.
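A worker loop with that shape might look like the sketch below. It is an illustration under assumptions, not the actual worker: the job fields (`id`, `model`, `task`), the model names, and the stub runners are hypothetical, and `pull` and `push` are plain callables so the example runs without Redis. In a real container they would wrap the queue client, and each runner would invoke the configured agent.

```python
from typing import Callable, Optional

Runner = Callable[[dict], str]

# Hypothetical registry: the "model" field in the job selects the runner.
# These stubs only echo the task; real runners would call the agent.
RUNNERS: dict[str, Runner] = {
    "claude-code": lambda job: f"[claude-code] {job['task']}",
    "gemini": lambda job: f"[gemini] {job['task']}",
}


def handle(job: dict) -> dict:
    """Run one job and always return a result the orchestrator can record."""
    runner = RUNNERS.get(job.get("model", "claude-code"))
    if runner is None:
        return {"job_id": job.get("id"), "status": "error", "error": "unknown model"}
    try:
        return {"job_id": job.get("id"), "status": "ok", "output": runner(job)}
    except Exception as exc:  # report the failure instead of crashing the loop
        return {"job_id": job.get("id"), "status": "error", "error": str(exc)}


def run_worker(
    pull: Callable[[], Optional[dict]],
    push: Callable[[dict], None],
    max_jobs: Optional[int] = None,
) -> int:
    """Pull, execute, report, repeat. Stops when the queue is empty."""
    handled = 0
    while max_jobs is None or handled < max_jobs:
        job = pull()
        if job is None:
            break
        push(handle(job))
        handled += 1
    return handled


pending = [
    {"id": 1, "model": "gemini", "task": "market research"},
    {"id": 2, "model": "not-configured", "task": "anything"},
]
results: list[dict] = []
count = run_worker(lambda: pending.pop(0) if pending else None, results.append)
```

The worker holds no state between jobs and knows nothing beyond the job it was handed. Swapping the model means changing one field in the job, and replacing a broken container means starting another copy of the same loop.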

Every container is disposable. If something breaks, kill it and spin up a new one. No state to preserve. No environment to debug.

We can scale horizontally by adding machines. Every new Mac Mini or VPS joins the same Redis queue. A worker in São Paulo and a worker in Frankfurt pull from the same pool. The pipeline doesn't care where the work happens.

What's Manual (For Now)

We're not pretending this is fully autonomous. Here's what still requires a human:

- Verification steps: A human reads the AI output and decides if it moves forward. We're discovering which checkpoints we can trust to automate.

- Go / No Go: An AI can analyze a market. It can't decide if we should bet on it.

- Cofounder conversation: Every venture involves a real person with a real vision. The pipeline supports the conversation; it doesn't replace it.

- Final code review: The automated review catches a lot. The human review exists because we've seen agents approve code that works technically but doesn't belong structurally.

Every manual checkpoint is a question: "do we have enough confidence to remove this?" Some days we automate one. Some days something breaks and we add one back.

The pipeline changes every week.

Why Self-Hosted

Three reasons.

We've modified Plane for our pipeline states and integrated TaskMaster AI. You can't do that with a SaaS you don't control.

Today Claude Code and Gemini; tomorrow something else. The model is a flag in the job config. Swapping it doesn't touch the pipeline.

Containers on Mac Minis we own are cheaper than API calls at volume. Dozens of agents in parallel across ventures—the math matters.

Work In Progress

This pipeline is not finished. Every week we find something that can be faster, something that broke, something that a new model handles better than the one we were using.

If you're a technical founder and want to see how this works in practice, or if you're interested in building something together: get in touch.

Key takeaways

- The pipeline automates agent work and keeps full context in Plane; every decision and output is traceable.

- Self-hosted stack (Plane, n8n, Redis, Docker) gives control over customization, models, and cost at scale.

- Internal pipeline moves ventures from idea to build via trigger states and human verification; coding pipeline parallelizes tasks with dependency-aware workers.

- Workers are stateless containers; scale by adding machines to the same Redis queue.

- Humans stay in the loop at verification steps, Go/No Go, and cofounder conversation; the pipeline evolves weekly.